Compliance

Fair Workweek Laws Explained: A Guide for Employers [2026]
fair workweek, labor compliance, predictive scheduling

Payroll

Simplifying Payroll for New Hires (and How Workforce.com Makes it Easy)
HR Payroll Systems, onboarding, payroll software, time tracking

Staffing Management

5 sneaky ways employees commit time theft (how to stop it)
buddy punching, employee time theft, time and attendance, time theft, timesheets

Legal

Severance pay & final paycheck laws by state (2026)
Summary: There are no state or federal laws regarding severance pay. Organizations might...

Technology

Global FEC Market Projected to Top $80B by 2033—What it Means for Operators
As operators invest in new attractions and guest experiences, it’s just as important to...

Staffing Management

Rest & Lunch Break Laws by State (2026 Update)
Summary: Federal law does not require meal or rest breaks. Some states have laws ...

Compliance

Jury duty laws in every US state (2026)
Federal law doesn’t require employers to provide employees leave, compensation, or bene...

Compliance

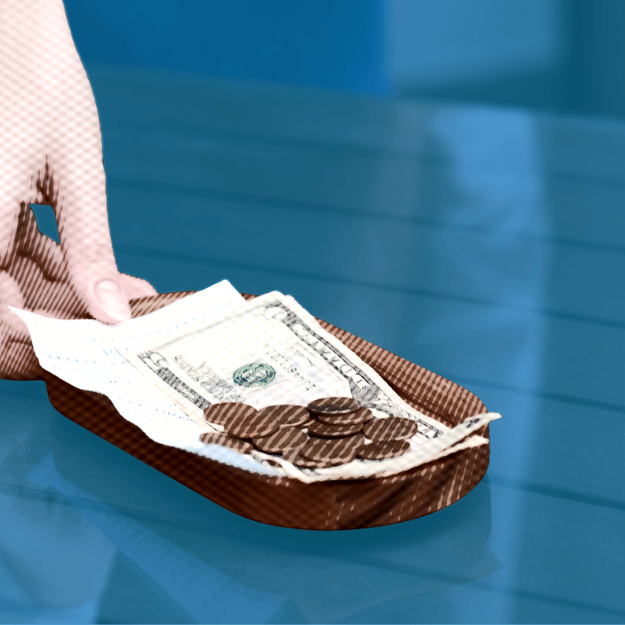

Minimum Wage by State (2026)
Summary: More than 20 states are raising their minimum wages in 2026, with many increase...

Compliance

Overtime Pay Laws | States + Federal (2026 Update)
Summary: If you are in charge of hourly employees, it’s likely that there will be days,...

Time and Attendance

How Time Tracking Systems Help (or Hurt) Wage Compliance
The most expensive wage claims don’t always come from missed punches. Sometimes, they c...

Technology

A Year of Listening, Learning, and Building at Workforce.com
As 2025 comes to a close, we’re taking a moment to look back on some of the highlights ...